AI Limitations and Challenges: What You Need to Know in 2025

Artificial Intelligence (AI) is no longer science fiction. It’s embedded in our everyday lives, from the recommendations we see on streaming platforms to the navigation systems that guide our routes. But as dazzling as these advancements are, they come with significant caveats. That’s where the discussion around AI limitations and challenges becomes not only relevant but urgent.

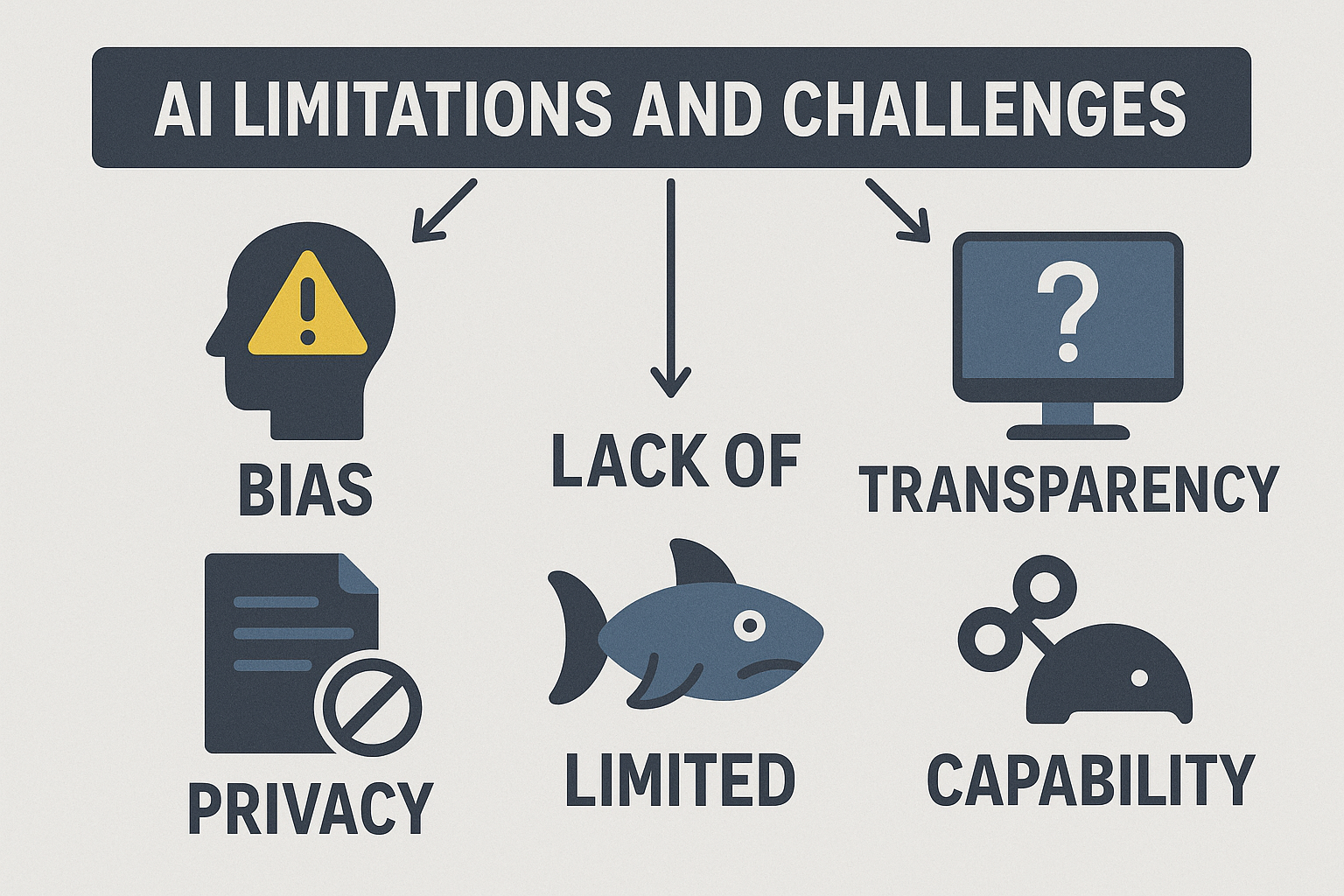

While AI promises speed, scale, and efficiency, it’s far from perfect. Behind the scenes, these systems still struggle with context, bias, transparency, and even basic human logic. If you’re fascinated by the rise of AI but skeptical about its real-world applications, you’re not alone. Understanding the limitations is just as important as celebrating the breakthroughs.

Let’s explore the real-world roadblocks AI still struggles with and what they mean for its future.

The Reality of AI: AI Limitations and Challenges in Understanding and Intelligence

We often say AI is “smart,” but that’s a stretch. In fact, most AI systems today, including large language models like ChatGPT, are pattern-recognition tools, not thinking entities. They don’t understand the world the way humans do.

Example: In a widely reported 2023 court case, lawyers submitted a filing that cited legal cases a chatbot had invented. The model did not “decide” to deceive anyone; it produced citations that looked like a statistically plausible continuation of the prompt.

Ultimately, AI mimics intelligence, but it doesn’t grasp meaning. It generates output based on patterns in its training data, not understanding. This makes it prone to hallucinations and inaccuracies, which are most costly in high-stakes scenarios.

Key Insight

- AI lacks comprehension and reasoning, leading to outputs that may seem correct but are fundamentally flawed.
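To make “pattern recognition, not understanding” concrete, here is a minimal sketch of a toy text generator. It is an illustration only and not how large language models are actually built: the corpus, the function names, and the bigram approach are all assumptions chosen for brevity. The generator simply picks a word that has followed the previous word before, so it can assemble fluent-looking claims that nobody ever wrote.

```python
import random
from collections import defaultdict

def build_bigram_model(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model

def generate(model, start, length, seed=0):
    """Emit up to `length` words by repeatedly sampling a previously seen follower."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same text
    output = [start]
    while len(output) < length:
        followers = model.get(output[-1])
        if not followers:  # dead end: this word was never followed by anything
            break
        output.append(rng.choice(followers))
    return " ".join(output)

# Made-up corpus for illustration
corpus = (
    "the court cited the case and the case cited the statute "
    "and the statute cited the court"
)
model = build_bigram_model(corpus)
print(generate(model, "the", 8))
```

Every adjacent word pair in the output appeared somewhere in the corpus, yet the sentence as a whole may state something the corpus never said. That is a small-scale version of a hallucination.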

Tackling AI Limitations and Challenges in Bias and Fairness

AI learns from data. However, human data is messy, biased, and historically flawed. That means AI often reflects and amplifies existing societal prejudices.

A 2018 study from the MIT Media Lab found that commercial facial-analysis systems had error rates of over 30% for darker-skinned women, compared with less than 1% for lighter-skinned men.

Where AI Goes Wrong

- Resume screening tools penalizing candidates from certain demographics

- Credit scoring systems disproportionately affecting minority communities

Solutions Are In Progress

In response, companies are investing in fairness-aware AI and ethical auditing frameworks. Until the data pipeline itself is fixed, however, these limitations and challenges will persist.
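One basic building block of a fairness audit is comparing error rates across groups. The sketch below is a simplified assumption of what such a check might look like, not any particular company’s framework. The records, the group names, and the `error_rate_by_group` helper are all hypothetical.

```python
from collections import defaultdict

def error_rate_by_group(records):
    """Return {group: fraction of wrong predictions} for (group, actual, predicted) rows."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, actual, predicted in records:
        totals[group] += 1
        if actual != predicted:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

# Made-up evaluation rows: (group, true label, model prediction)
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

rates = error_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
print(rates)
print("largest gap between groups:", gap)
```

A large gap does not explain why the model fails for one group, but it flags where to look. A real audit would also examine sample sizes and the kinds of errors made.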

Contextual and Common Sense: Still Missing in Action

Humans are great at understanding nuance. AI? Not so much.

Real-world fail: I once asked an AI tool to craft a birthday message for a friend who just lost their pet. The result? “Hope your day is full of tail-wagging joy!”

This disconnect highlights AI’s failure to process emotional and situational context. Without common sense or empathy, it falls short in social and sensitive settings.

When Context Matters Most

- Healthcare: Misinterpreting symptoms or tone in patient input

- Customer Service: Misreading anger, humor, or sarcasm

- Mental Health: Responding inappropriately to emotional disclosures

The Black Box of AI: AI Limitations and Challenges in Decision Making

Modern AI, especially deep learning, operates like a black box. In other words, it delivers decisions, but we often can’t explain how they were made.

For example, in finance, an AI model might deny a loan without a rationale that is clear to anyone, even the developers who built it.

This lack of transparency poses major problems:

- Trust: Users can’t rely on opaque systems.

- Accountability: How do you contest an AI’s decision?

- Regulation: Policymakers struggle to audit or govern what they can’t explain.

Efforts like Explainable AI (XAI) aim to address this, but they’re still in development.
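To give a feel for what an explanation can look like, here is a rough sketch of one simple idea: reset each input to a reference value, one at a time, and see how much the score moves. This is a heavily simplified illustration under stated assumptions, not a production XAI method. The `loan_score` function stands in for an opaque model, and its weights, the applicant, and the baseline values are all invented.

```python
def loan_score(applicant):
    """Stand-in for an opaque model: we can call it but pretend we cannot read it."""
    return (0.5 * applicant["income"] / 100_000
            - 0.8 * applicant["debt_ratio"]
            + 0.1 * applicant["years_employed"] / 10)

def feature_effects(model, applicant, baseline):
    """Score change attributable to each feature, measured against a baseline value."""
    original = model(applicant)
    effects = {}
    for name in applicant:
        probe = dict(applicant)        # copy so the real applicant is untouched
        probe[name] = baseline[name]   # reset a single feature to the baseline
        effects[name] = original - model(probe)
    return effects

# Hypothetical applicant and hypothetical "typical" baseline
applicant = {"income": 40_000, "debt_ratio": 0.6, "years_employed": 2}
baseline = {"income": 60_000, "debt_ratio": 0.3, "years_employed": 5}

effects = feature_effects(loan_score, applicant, baseline)
for name, effect in sorted(effects.items(), key=lambda item: item[1]):
    print(f"{name}: {effect:+.2f}")
```

The most negative number points to the input that pulled the score down the most relative to the baseline. This works neatly here because the stand-in model is simple. With real deep learning models, inputs interact in ways that make such one-at-a-time probes only a partial picture.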

Environmental Costs: The Hidden AI Limitations and Challenges

Behind every AI-powered app or chatbot lies massive infrastructure:

| AI Model | Estimated Training Footprint |
|---|---|
| GPT-3 | 552 metric tons CO2e (carbon emissions) |
| BERT (Large) | 1,438 kWh (electricity) |
| OpenAI Codex | Unpublished, but presumed significant |

These systems require:

- Huge datasets

- Powerful GPUs/TPUs

- Global data centers

This makes AI resource-hungry and environmentally taxing. Plus, only the largest tech companies can afford to train such models, which concentrates power in a few hands.

Key Takeaway

AI scalability is constrained by its high demand for energy, data, and compute resources.
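Electricity use and carbon emissions are related but not the same thing. A common back-of-the-envelope conversion multiplies the energy consumed by the carbon intensity of the grid that supplied it. The sketch below assumes that simple relationship. The function name and both sets of inputs are hypothetical and are not measurements of any real model.

```python
def training_emissions_tonnes(energy_mwh, grid_kg_co2e_per_kwh):
    """Metric tons CO2e = energy in kWh x grid carbon intensity in kg/kWh, divided by 1000."""
    energy_kwh = energy_mwh * 1_000
    return energy_kwh * grid_kg_co2e_per_kwh / 1_000

# Hypothetical training run of 1,000 MWh on two different grids
carbon_heavy = training_emissions_tonnes(1_000, 0.4)
low_carbon = training_emissions_tonnes(1_000, 0.05)
print(f"carbon-heavy grid: {carbon_heavy:.0f} t CO2e")
print(f"low-carbon grid:   {low_carbon:.0f} t CO2e")
```

The same training job can have a very different footprint depending on where and when it runs, which is one reason published figures for different models are hard to compare directly.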

The Illusion of Creativity: AI Limitations and Challenges in Artistic Expression

AI can paint portraits, write poems, and compose music. However, it doesn’t create the way humans do.

Instead, it blends existing styles, imitates patterns, and optimizes prompts. It doesn’t feel, imagine, or draw from lived experience.

Try This: Ask an AI to write a love poem. It might sound pretty, but it won’t truly feel anything.

So while AI is a great co-pilot, it’s still not a replacement for human originality.

Misinformation and Malicious Use

AI tools can easily be turned into weapons:

- Deepfakes impersonate politicians or celebrities.

- Language models generate convincing fake news.

- Code generators can write malware or phishing scripts.

MIT Technology Review warns that synthetic media could erode trust in digital content.

This is not theoretical. In fact, it’s already happening. That means governments, companies, and platforms must act fast to regulate AI-generated content.

The Governance Struggle: AI Limitations and Challenges in Regulation

AI has outpaced regulation. Unfortunately, there are no universal rules for development, deployment, or accountability.

The EU AI Act is a good start, classifying systems by risk and demanding transparency. However, most countries are still scrambling to catch up.

Challenges for Policymakers

- Defining what counts as “harmful” AI use

- Balancing innovation with public safety

- Coordinating international standards

Until better governance is in place, these ongoing AI limitations and challenges will hinder progress.

Conclusion: A Call for Responsible Innovation

AI is powerful, but it’s not magic. Understanding its limits is crucial to shaping a better digital future. As users, developers, and leaders, we must:

- Stay informed about AI’s capabilities and boundaries

- Push for ethical, transparent, and inclusive design

- Advocate for policies that safeguard people over profits

Let’s embrace the potential of AI not with blind faith, but with clear vision and accountability.
